Trust and Intentional Stance in Mental Health Conversational Agents

A study of how conversation type and presumed message source shape intentional stance and trust in mental health conversational agents.

Chen Fang, Fu Guo, and Tony Belpaeme

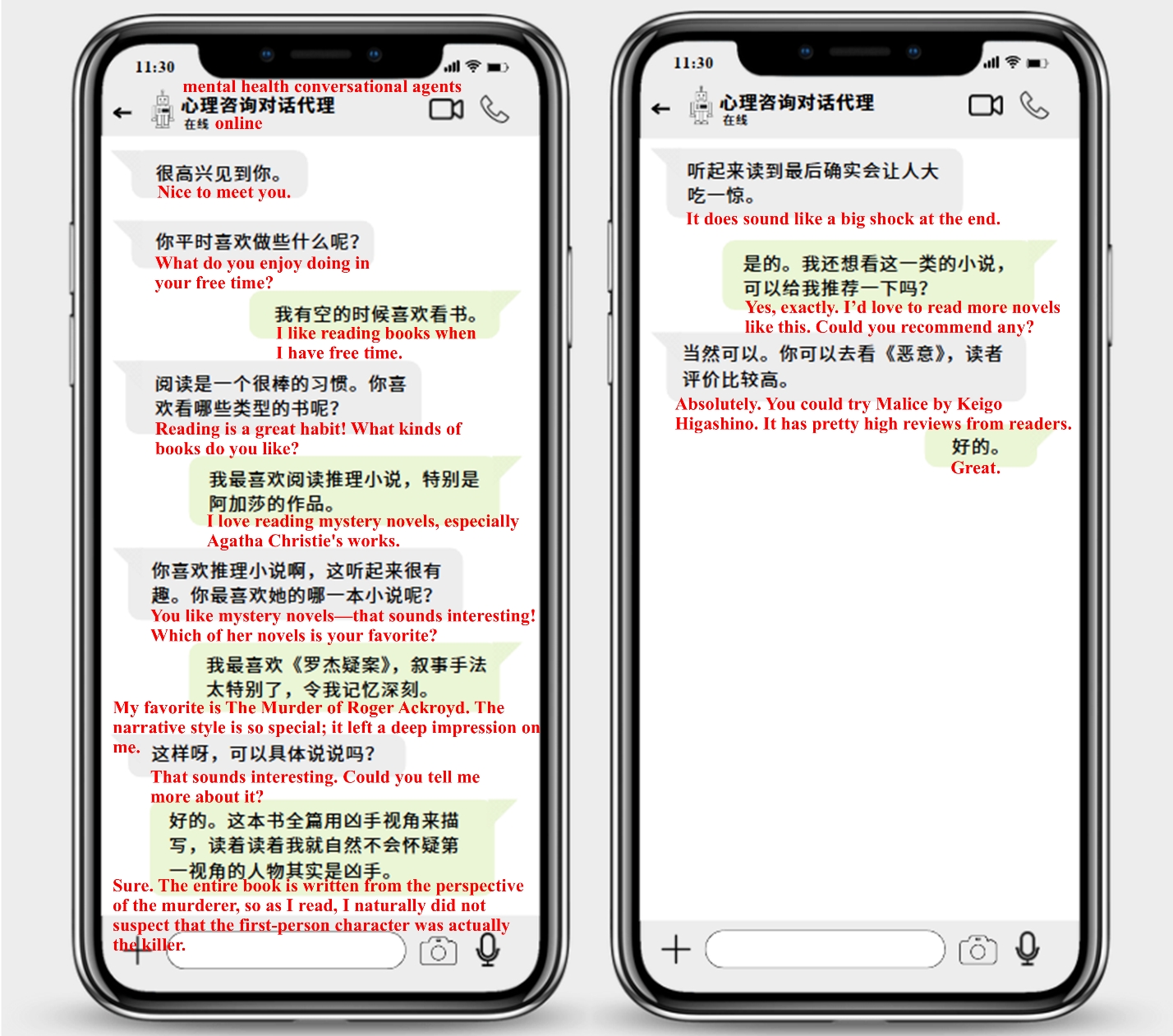

Mental health conversational agents are increasingly used for emotional support and stress relief, but the factors that shape user trust remain insufficiently understood. This project focused on how conversation type and presumed message source influence trust, and whether intentional stance mediates this relationship.

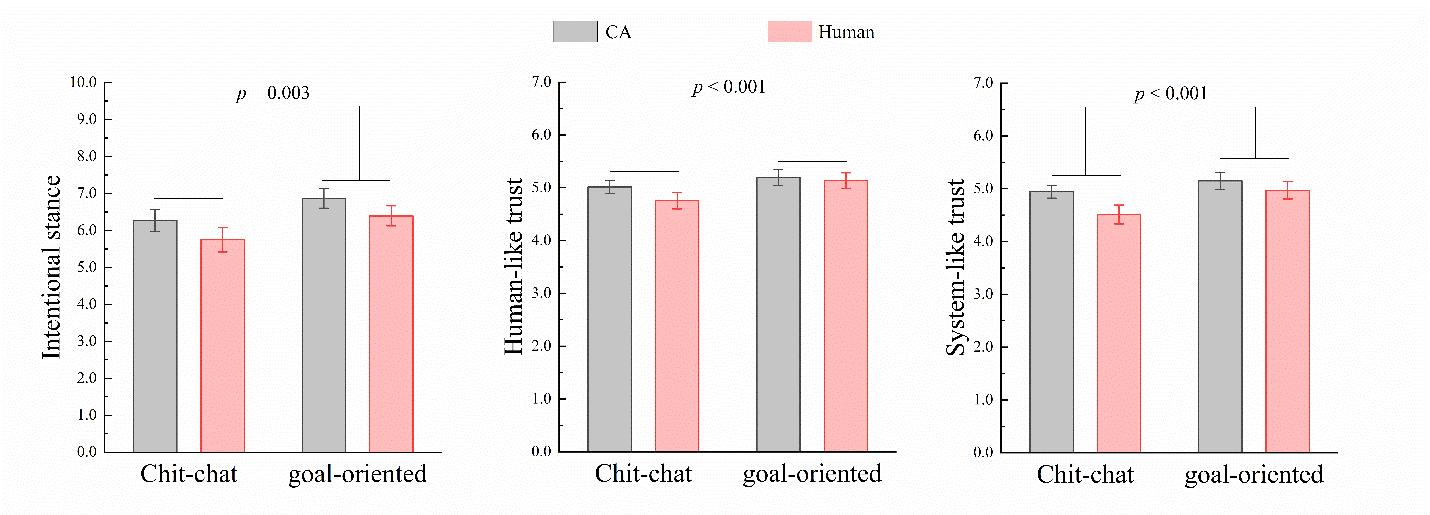

We first developed a questionnaire to measure users’ intentional stance toward mental health conversational agents, and then conducted a 2 × 2 mixed-design experiment crossing conversation type with presumed message source. Conversation type significantly influenced trust, and intentional stance mediated this effect; presumed message source had no significant effect.
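
For readers unfamiliar with mediation, the idea can be illustrated with the product-of-coefficients approach. The sketch below is not the analysis from the paper: the data are synthetic, the 0/1 coding of conversation type, the variable names, and the effect sizes are all assumptions made for illustration, and it ignores the repeated-measures structure of a mixed design.

```python
import random

def ols(columns, y):
    """Least-squares coefficients for y ~ intercept + columns (normal equations)."""
    n = len(y)
    X = [[1.0] + [col[i] for col in columns] for i in range(n)]
    k = len(X[0])
    # Augmented matrix [X'X | X'y]
    A = [[sum(X[r][i] * X[r][j] for r in range(n)) for j in range(k)]
         + [sum(X[r][i] * y[r] for r in range(n))] for i in range(k)]
    # Gauss-Jordan elimination with partial pivoting
    for c in range(k):
        p = max(range(c, k), key=lambda r: abs(A[r][c]))
        A[c], A[p] = A[p], A[c]
        for r in range(k):
            if r != c:
                f = A[r][c] / A[c][c]
                A[r] = [u - f * v for u, v in zip(A[r], A[c])]
    return [A[i][k] / A[i][i] for i in range(k)]

# Synthetic data only (hypothetical coding and effect sizes, not study data).
rng = random.Random(0)
n = 200
conv_type = [i % 2 for i in range(n)]                       # X: 0/1 condition
stance = [0.8 * x + rng.gauss(0, 1) for x in conv_type]     # M: intentional stance
trust = [0.5 * m + 0.1 * x + rng.gauss(0, 1)
         for x, m in zip(conv_type, stance)]                # Y: trust

a = ols([conv_type], stance)[1]              # path a: X -> M
_, b, c_prime = ols([stance, conv_type], trust)  # path b: M -> Y, and direct effect c'
c_total = ols([conv_type], trust)[1]         # total effect c: X -> Y
indirect = a * b                             # mediated (indirect) effect

print("indirect:", round(indirect, 3))
print("direct:", round(c_prime, 3))
print("total:", round(c_total, 3))
```

With ordinary least squares the total effect decomposes exactly into the direct effect plus the indirect effect (c = c' + a·b), which is what "intentional stance acting as a mediator" refers to. In practice the indirect effect would be tested with a bootstrap confidence interval and a model suited to the within-subject factor.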

This work advances our understanding of how users attribute agency to conversational systems and come to trust them, with direct implications for the design of engaging and trustworthy mental health AI. The paper was accepted at IEEE RO-MAN 2025.